Chapter 1. Installing OpenStack with Ansible

In this chapter, we will cover the following topics:

- Introduction – the OpenStack architecture

- Host network configuration

- Root SSH keys configuration

- Installing Ansible, playbooks, and dependencies

- Configuring the installation

- Running the OpenStack-Ansible playbooks

- Troubleshooting the installation

- Manually testing the installation

- Modifying the OpenStack configuration

- Virtual lab – vagrant up!

Introduction – the OpenStack architecture

OpenStack is a suite of projects that combine into a software-defined environment to be consumed using cloud-friendly tools and techniques. This popular open source software allows users to easily consume compute, network, and storage resources that have traditionally been controlled by disparate methods and tools, by various teams in IT departments big and small. While consistency of APIs can be achieved between versions of OpenStack, an administrator is free to choose which features of OpenStack to install, and as such there is no single method or architecture for installing the software. This flexibility can lead to confusion when choosing how to deploy OpenStack. That said, it is widely agreed that the services that end users interact with (the OpenStack services, supporting software such as the databases, and the APIs) must be highly available.

A very popular method for installing OpenStack is the OpenStack-Ansible project (https://github.com/openstack/openstack-ansible). This method of installation allows an administrator to define highly available controllers together with arrays of compute and storage, and through the use of Ansible, deploy OpenStack in a very consistent way with a small number of dependencies. Ansible is a system configuration and management tool that operates over standard SSH connections. Ansible itself has very few dependencies, and as it uses SSH to communicate, most Linux distributions and networks are well catered for when it comes to using this tool. It is also very popular with many system administrators around the globe, so installing OpenStack on top of what they already know lowers the barrier to entry for setting up a cloud environment for their enterprise users.

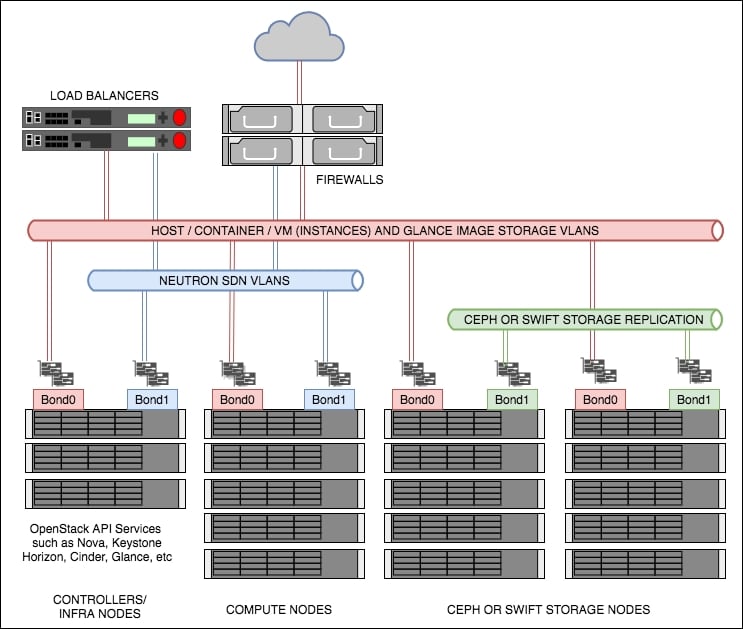

OpenStack can be architected in any number of ways; OpenStack-Ansible doesn’t address the architecture problem directly: users are free to define any number of controller services (such as Horizon, Neutron Server, Nova Server, and MySQL). Through experience at Rackspace and feedback from users, a popular architecture is defined, which is shown here:

Figure 1: Recommended OpenStack architecture used in this book

As shown in the preceding diagram (Figure 1), there are a few concepts to first understand. These are described as follows.

Controllers

The controllers (also referred to as infrastructure or infra nodes) run the heart of the OpenStack services and are the only servers exposed (via load balanced pools) to your end users. The controllers run the API services, such as Nova API, Keystone API, and Neutron API, as well as the core supporting services such as MariaDB for the database required to run OpenStack, and RabbitMQ for messaging. It is for this reason that, in a production setting, these servers are set up to be as highly available as required. This means that they are deployed as clusters behind (highly available) load balancers, starting with a minimum of 3 in the cluster. Using odd numbers starting from 3 allows a cluster to lose a single server without affecting service while still retaining quorum (the minimum number of votes needed). This means that when the unhealthy server comes back online, the data can be replicated from the remaining 2 servers (which are, between them, consistent), thus ensuring data consistency.
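The quorum arithmetic behind the "odd numbers starting from 3" advice can be sketched in a few lines of Python. This is only an illustration that assumes a simple strict-majority rule; the function names are hypothetical, and the exact behavior of a given clustered service may differ in detail.

```python
def quorum(cluster_size: int) -> int:
    """Smallest number of members that forms a strict majority."""
    return cluster_size // 2 + 1


def tolerated_failures(cluster_size: int) -> int:
    """Members that can be lost while the remainder still holds quorum."""
    return cluster_size - quorum(cluster_size)


# Compare a few cluster sizes under the assumed majority rule.
for size in (2, 3, 4, 5):
    print(
        f"{size} nodes: quorum={quorum(size)}, "
        f"tolerated failures={tolerated_failures(size)}"
    )
```

Under this assumption, a fourth node tolerates no more failures than three do, which is why cluster sizes are usually grown in odd steps.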

Networking is recommended to be highly resilient, so ensure that Linux has been configured to bond or aggregate the network interfaces so that in the event of a faulty switch port, or broken cable, your services remain available. An example networking configuration for Ubuntu can be found in Appendix A.

Computes

These are the servers that run the hypervisor or container service that OpenStack schedules workloads to when a user requests a Nova resource (such as a virtual machine). These are not too dissimilar to hosts running a hypervisor, such as ESXi or Hyper-V, and OpenStack Compute servers can be configured in a very similar way, optionally using shared storage. However, most installations of OpenStack forgo shared storage in the architecture. This small detail of not using shared storage, which implies the virtual machines run from the hard disks of the compute host itself, can have a large impact on the users of your OpenStack environment when it comes to discussing the resiliency of the applications in that environment. An environment set up like this pushes most of the responsibility for application uptime to developers, which gives the greatest flexibility for a long-term cloud strategy. When an application relies on the underlying infrastructure being 100% available, the gravity imposed by the infrastructure ties applications to specific data center technology to keep them running. However, OpenStack can be configured to introduce shared storage such as Ceph (http://ceph.com/) to allow for operational features such as live-migration (the ability to move running instances from one hypervisor to another with no downtime), allowing enterprise users to move their applications to a cloud environment in a very safe way. These concepts will be discussed in more detail in later chapters on compute and storage. As such, the reference architecture for a compute node is to expect virtual machines to run locally on the hard drives in the server itself.

With regard to networking, like the controllers, the network must also be configured to be highly available. A compute node that has no network available might be very secure, but it would be equally useless to a cloud environment! Configure bonded interfaces in the same way as the controllers. Further information for configuring bonded interfaces under Ubuntu can be found in Appendix A.

Storage

Storage in OpenStack refers to block storage and object storage. Block storage (providing LUNs or hard drives to virtual machines) is provided by the Cinder service, while object storage (API-driven storage of objects or blobs) is provided by Swift or Ceph. Swift and Ceph manage each individual drive in a server designated as an object storage node, very much like a RAID card manages individual drives in a typical server. Each drive is an independent entity that Swift or Ceph writes data to. For example, if a storage node has 24 x 2.5in SAS disks, Swift or Ceph will be configured to write to any one of those 24 disks. Cinder, however, can use a multitude of backends to store data. For example, Cinder can be configured to communicate with third-party arrays such as NetApp or SolidFire, or it can be configured to talk to Sheepdog or Ceph, as well as the simplest of services such as LVM. In fact, OpenStack can be configured in such a way that Cinder uses multiple backends so that a user is able to choose the storage applicable to the service they require. This gives great flexibility to both end users and operators as it means workloads can be targeted at specific backends suitable for that workload or storage requirement.

This book briefly covers Ceph as the backend storage engine for Cinder. Ceph is a very popular, highly available open source storage service. Ceph has its own disk requirements to give the best performance. Each of the Ceph storage nodes in the preceding diagram is referred to as a Ceph OSD (Ceph Object Storage Daemon). We recommend starting with 5 of these nodes, although this is not a hard and fast rule. Performance tuning of Ceph is beyond the scope of this book, but at a minimum, we would highly recommend having SSDs for Ceph journaling and either SSD or SAS drives for the OSDs (the physical storage units).

The differences between a Swift node and a Ceph node in this architecture are minimal. Both require an interface (bonded for resilience) for replication of data in the storage cluster, as well as an interface (bonded for resilience) used for data reads and writes from the client or service consuming the storage. The primary difference is the recommendation to use SSDs (or NVMe) as the journaling disks for Ceph.

Load balancing

The end users of the OpenStack environment expect services to be highly available, and OpenStack provides REST API services to all of its features. This makes the REST API services very suitable for placing behind a load balancer. In most deployments, load balancers would usually be highly available hardware appliances such as F5. For the purpose of this book, we will be using HAProxy. The premise behind this is the same though—to ensure that the services are available so your end users can continue working in the event of a failed controller node.

OpenStack-Ansible installation requirements

Operating System: Ubuntu 16.04 x86_64

Minimal data center deployment requirements

For a physical installation, the following will be needed:

- Controller servers (also known as infrastructure nodes)

  - At least 64 GB RAM

  - At least 300 GB disk (RAID)

  - 4 Network Interface Cards (for creating two sets of bonded interfaces; one would be used for infrastructure and all API communication, including client, and the other would be dedicated to OpenStack networking: Neutron)

  - Shared storage, or object storage service, to provide backend storage for the base OS images used

- Compute servers

  - At least 64 GB RAM

  - At least 600 GB disk (RAID)

  - 4 Network Interface Cards (for creating two sets of bonded interfaces, used in the same way as the controller servers)

- Optional (if using Ceph for Cinder) 5 Ceph Servers (Ceph OSD nodes)

  - At least 64 GB RAM

  - 2 x SSD (RAID1) 400 GB for journaling

  - 8 x SAS or SSD 300 GB (No RAID) for OSD (size up requirements and adjust accordingly)

  - 4 Network Interface Cards (for creating two sets of bonded interfaces; one for replication and the other for data transfer in and out of Ceph)

- Optional (if using Swift) 5 Swift Servers

  - At least 64 GB RAM

  - 8 x SAS 300 GB (No RAID) (size up requirements and adjust accordingly)

  - 4 Network Interface Cards (for creating two sets of bonded interfaces; one for replication and the other for data transfer in and out of Swift)

- Load balancers

  - 2 physical load balancers configured as a pair

  - Or 2 servers running HAProxy with a Keepalived VIP to provide the API endpoint IP address:

    - At least 16 GB RAM

    - HAProxy + Keepalived

    - 2 Network Interface Cards (bonded)

Note

Tip: Setting up a physical home lab? Ensure you have a managed switch so that interfaces can have VLANs tagged.

Host network configuration

Installation of OpenStack using an orchestration and configuration tool such as Ansible performs a lot of tasks that would otherwise have to be undertaken manually. However, we can only use an orchestration tool if the servers we are deploying to are configured in a consistent way and described to Ansible.

The following section will describe a typical server setup that uses two sets of active/passive bonded interfaces for use by OpenStack. Ensure that these are cabled appropriately.

We assume that the following physical network cards are installed in each of the servers; adjust them to suit your environment:

- p2p1 and p2p2

- p4p1 and p4p2

We assume that the host network is currently using p2p1. The host network is the basic network that each of the servers currently resides on, and it allows you to access each one over SSH. It is assumed that this network also has a default gateway configured, and allows internet access. There should be no other networks required at this point as the servers are currently unconfigured and are not running OpenStack services.

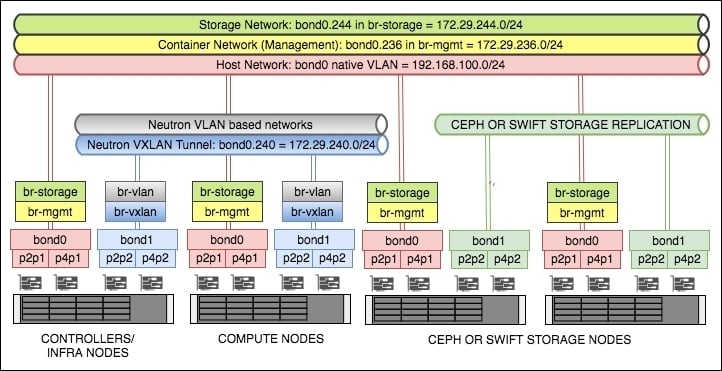

At the end of this section, we will have created the following bonded interfaces:

- bond0: This consists of the physical interfaces p2p1 and p4p1. The bond0 interface will be used for host, OpenStack management, and storage traffic.

- bond1: This consists of the physical interfaces p2p2 and p4p2. The bond1 interface will be used for Neutron networking within OpenStack.

We will have created the following VLAN tagged interfaces:

- bond0.236: This will be used for the container network

- bond0.244: This will be used for the storage network

- bond1.240: This will be used for the VXLAN tunnel network

And the following bridges:

- br-mgmt: This will use the bond0.236 VLAN interface, and will be configured with an IP address from the 172.29.236.0/24 range.

- br-storage: This will use the bond0.244 VLAN interface, and will be configured with an IP address from the 172.29.244.0/24 range.

- br-vxlan: This will use the bond1.240 VLAN interface, and will be configured with an IP address from the 172.29.240.0/24 range.

- br-vlan: This will use the untagged bond1 interface, and will not have an IP address configured.
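Before touching any configuration files, it can help to write the plan down as data and sanity-check it. The following Python sketch records the bridges listed above and checks that their subnets do not overlap and how many hosts each can hold. The dictionary layout and function names are illustrative assumptions, not anything OpenStack-Ansible itself consumes.

```python
import ipaddress

# Bridge plan as described in the text; the dictionary shape is hypothetical.
BRIDGES = {
    "br-mgmt": {"port": "bond0.236", "subnet": "172.29.236.0/24"},
    "br-storage": {"port": "bond0.244", "subnet": "172.29.244.0/24"},
    "br-vxlan": {"port": "bond1.240", "subnet": "172.29.240.0/24"},
    "br-vlan": {"port": "bond1", "subnet": None},  # no IP address configured
}


def overlapping_subnets(bridges):
    """Return pairs of bridge names whose subnets overlap."""
    nets = [
        (name, ipaddress.ip_network(cfg["subnet"]))
        for name, cfg in bridges.items()
        if cfg["subnet"]
    ]
    clashes = []
    for i, (name_a, net_a) in enumerate(nets):
        for name_b, net_b in nets[i + 1:]:
            if net_a.overlaps(net_b):
                clashes.append((name_a, name_b))
    return clashes


def usable_hosts(subnet):
    """Addresses left after removing the network and broadcast addresses.

    Only meaningful for IPv4 prefixes shorter than /31.
    """
    return ipaddress.ip_network(subnet).num_addresses - 2


print("Overlaps:", overlapping_subnets(BRIDGES))
for name, cfg in BRIDGES.items():
    if cfg["subnet"]:
        print(f"{name}: {usable_hosts(cfg['subnet'])} usable addresses")
```

A check like this is a cheap way to confirm that each subnet leaves room for the containers, physical hosts, and future growth you expect.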

Note

Tip: Ensure that your subnets are large enough to support your current requirements as well as future growth!

The following diagram shows the networks, interfaces, and bridges set up before we begin our installation of OpenStack:

Getting ready

We assume that each server has Ubuntu 16.04 installed.

Log in, as root, onto each server that will have OpenStack installed.

How to do it…

Configuration of the host's networking, on an Ubuntu system, is performed by editing the /etc/network/interfaces file.

- First of all, ensure that we have the right network packages installed on each server. As we are using VLANs and bridges, the following packages must be installed:

      apt update
      apt install vlan bridge-utils

- Now edit the /etc/network/interfaces file on the first server using your preferred editor:

      vi /etc/network/interfaces

- We will first configure the bonded interfaces. The first part of the file will describe this. Edit this file so that it looks like the following to begin with:

      # p2p1 + p4p1 = bond0 (used for host, container and storage)
      auto p2p1
      iface p2p1 inet manual
        bond-master bond0
        bond-primary p2p1

      auto p4p1
      iface p4p1 inet manual
        bond-master bond0

      # p2p2 + p4p2 = bond1 (used for Neutron and Storage Replication)
      auto p2p2
      iface p2p2 inet manual
        bond-master bond1
        bond-primary p2p2

      auto p4p2
      iface p4p2 inet manual
        bond-master bond1

- Now we will configure the VLAN interfaces that are tagged against these bonds. Continue editing the file to add in the following tagged interfaces. Note that, apart from the host address on bond0, we are not assigning IP addresses to the OpenStack interfaces just yet:

      # We're using bond0 on a native VLAN for the 'host' network.
      # This bonded interface is likely to replace the address you
      # are currently using to connect to this host.
      # Update the DNS server to suit/ensure you can resolve DNS.
      auto bond0
      iface bond0 inet static
        address 192.168.100.11
        netmask 255.255.255.0
        gateway 192.168.100.1
        dns-nameserver 192.168.100.1

      # Container VLAN
      auto bond0.236
      iface bond0.236 inet manual

      # VXLAN Tunnel VLAN
      auto bond1.240
      iface bond1.240 inet manual

      # Storage (Instance to Storage) VLAN
      auto bond0.244
      iface bond0.244 inet manual

  Tip: Use appropriate VLANs as required in your own environment. The VLAN tags used here are for reference only. Ensure that the correct VLAN tag is configured against the correct bonded interface. bond0 is for host-type traffic; bond1 is predominantly for Neutron-based traffic, except on storage nodes, where it is used for storage replication.

- We will now create the bridges, and place IP addresses on them as necessary (note that br-vlan does not have an IP address assigned). Continue editing the same file and add in the following lines:

      # Container bridge (br-mgmt)
      auto br-mgmt
      iface br-mgmt inet static
        address 172.29.236.11
        netmask 255.255.255.0
        bridge_ports bond0.236
        bridge_stp off

      # Neutron's VXLAN bridge (br-vxlan)
      auto br-vxlan
      iface br-vxlan inet static
        address 172.29.240.11
        netmask 255.255.255.0
        bridge_ports bond1.240
        bridge_stp off

      # Neutron's VLAN bridge (br-vlan)
      auto br-vlan
      iface br-vlan inet manual
        bridge_ports bond1
        bridge_stp off

      # Storage Bridge (br-storage)
      auto br-storage
      iface br-storage inet static
        address 172.29.244.11
        netmask 255.255.255.0
        bridge_ports bond0.244
        bridge_stp off

  Note: These bridge names are referenced in the OpenStack-Ansible configuration file, so ensure you name them correctly. Be careful to ensure that the correct bridge is assigned to the correct bonded interface.

- Save and exit the file, then issue the following command:

      systemctl restart networking

- As we are configuring our OpenStack environment to be as highly available as possible, it is suggested that you also reboot your server at this point to ensure the basic server, with redundant networking in place, comes back up as expected:

      reboot

- Now repeat this for each server on your network.

- Once all the servers are done, ensure that your servers can communicate with each other over these newly created interfaces and subnets. A test like the following might be convenient:

      apt install fping
      fping -a -g 172.29.236.0/24
      fping -a -g 172.29.240.0/24
      fping -a -g 172.29.244.0/24

Note

Tip: We also recommend that you perform a network cable unplugging exercise to ensure that the failover from one active interface to another is working as expected.

How it works…

We have configured the physical networking of our hosts to ensure a good known state and configuration for running OpenStack. Each of the interfaces configured here is specific to OpenStack—either directly managed by OpenStack (for example, br-vlan) or used for inter-service communication (for example, br-mgmt). In the former case, OpenStack utilizes the br-vlan bridge and configures tagged interfaces on bond1 directly.

Note that the convention used here, of VLAN tag ID using a portion of the subnet, is only to highlight a separation of VLANs to specific subnets (for example, bond0.236 is used by the 172.29.236.0/24 subnet). This VLAN tag ID is arbitrary, but must be set up in accordance with your specific networking requirements.
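The naming convention can be made explicit with a small Python sketch that derives the tagged interface name and a host's address from a subnet. It assumes /24 subnets and the "VLAN ID equals third octet" convention used in this recipe; the helper names are hypothetical, and the convention itself is a readability aid rather than a requirement.

```python
import ipaddress


def vlan_interface(bond: str, subnet: str) -> str:
    """Name a tagged interface using the subnet's third octet as the VLAN ID."""
    network = ipaddress.ip_network(subnet)
    third_octet = network.network_address.packed[2]
    return f"{bond}.{third_octet}"


def host_address(subnet: str, host_number: int) -> ipaddress.IPv4Address:
    """Address of a host, counted from the start of the subnet."""
    network = ipaddress.ip_network(subnet)
    address = network.network_address + host_number
    if address not in network:
        raise ValueError(f"host {host_number} does not fit in {subnet}")
    return address


# The first server in this recipe uses host number 11 on every subnet.
for bond, subnet in (
    ("bond0", "172.29.236.0/24"),
    ("bond1", "172.29.240.0/24"),
    ("bond0", "172.29.244.0/24"),
):
    print(vlan_interface(bond, subnet), host_address(subnet, 11))
```

If your VLAN IDs do not follow the subnet numbering, keep an explicit mapping instead of deriving one from the other.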

Finally, we performed a fairly rudimentary test of the network. This gives you the confidence that the network configuration that will be used throughout the life of your OpenStack cloud is fit for purpose and gives assurances in the event of a failure of a cable or network card.

Root SSH keys configuration

Ansible is designed to help system administrators drive greater efficiency in the datacenter by being able to configure and operate many servers using orchestration playbooks. In order for Ansible to be able to fulfill its duties, it needs an SSH connection to the Linux systems it is managing. Furthermore, in order to have a greater degree of freedom and flexibility, a hands-off approach using SSH public/private key pairs is required.

As the installation of OpenStack is expected to run as root, this stage expects the deployment host’s root public key to be propagated across all servers.

Getting ready

Ensure that you are root on the deployment host. In most cases, this is the first infrastructure controller node, which we have named infra01 for the purposes of this book. We will be assuming that all Ansible commands will be run from this host, and that it is able to connect to the rest of the servers on this network over the host network via SSH.

How to do it…

In order to allow a hands-free, orchestrated OpenStack-Ansible deployment, follow these steps to create the root SSH key pair on infra01 and propagate its public key to all servers required for the installation:

- As root, execute the following command to create an SSH key pair:

      ssh-keygen -t rsa -f ~/.ssh/id_rsa -N ""

  The output should look similar to this:

      Generating public/private rsa key pair.
      Your identification has been saved in /root/.ssh/id_rsa.
      Your public key has been saved in /root/.ssh/id_rsa.pub.
      The key fingerprint is:
      SHA256:q0mdqJI3TTFaiLrMaPABBboTsyr3pRnCaylLU5WEDCw root@infra01
      The key's randomart image is:
      +---[RSA 2048]----+
      |ooo .. |
      |E..o. . |
      |=. . + |
      |.=. o + |
      |+o . o oS |
      |+oo . .o |
      |B=++.o+ + |
      |*=B+oB.o |
      |o+.o=.o |
      +----[SHA256]-----+

- This has created two files in /root/.ssh, called id_rsa and id_rsa.pub. The file id_rsa is the private key, and must not be copied across the network. It is not required to be anywhere other than on this server. The file id_rsa.pub is the public key and can be shared with other servers on the network. If you have other nodes (for example, named infra02), use the following to copy this key to that node in your environment:

      ssh-copy-id root@infra02

  Tip: Ensure that you can resolve infra02 and the other servers, else amend the preceding command to use the host IP address instead.

- Now repeat step 2 for all servers on your network.

- Important: finally, ensure that you execute the following command so that infra01 is able to SSH to itself:

      ssh-copy-id root@infra01

- Test that you can ssh, as the root user, from infra01 to other servers on your network. You should be presented with a terminal ready to accept commands if successful, without being prompted for a passphrase. Consult /var/log/auth.log on the remote server if this behavior is incorrect.

How it works…

We first generated a key pair for use by SSH. The -t option specified the RSA key type, -f specified the output file for the private key (the public portion is written to the same name with .pub appended), and -N "" specified that no passphrase is to be used on this key. We then copied the public key to every server, including infra01 itself, with ssh-copy-id. Consult your own security standards if the presented options differ from your company's requirements.
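With more than a handful of servers, it can be convenient to generate the command list rather than type it. The following Python sketch only builds and prints the commands from this recipe; it does not execute anything. The helper names are hypothetical, and any host beyond infra01 and infra02 would be your own.

```python
import shlex


def keygen_command(key_path="/root/.ssh/id_rsa"):
    """Build the ssh-keygen invocation used in this recipe (no passphrase)."""
    return ["ssh-keygen", "-t", "rsa", "-f", key_path, "-N", ""]


def copy_id_commands(hosts, user="root"):
    """Build one ssh-copy-id invocation per host."""
    return [["ssh-copy-id", f"{user}@{host}"] for host in hosts]


# infra01 is included so the deployment host can also SSH to itself.
hosts = ["infra01", "infra02"]

for command in [keygen_command()] + copy_id_commands(hosts):
    # shlex.join quotes each argument so the line is safe to paste into a shell.
    print(shlex.join(command))
```

Printing first and running second keeps the step auditable; the same lists could be handed to subprocess.run if you prefer to automate the whole step.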

Installing Ansible, playbooks, and dependencies

In order for us to successfully install OpenStack using Ansible, we need to ensure that Ansible and any expected dependencies are installed on the deployment host. The OpenStack-Ansible project provides a handy script to do this for us, which is part of the version of OpenStack-Ansible we will be deploying.

Getting ready

Ensure that you are root on the deployment host. In most cases, this is the first infrastructure controller node, infra01.

At this point, we will be checking out the version of OpenStack-Ansible from GitHub.

How to do it…

To set up Ansible and its dependencies, follow these steps:

- We first need to use git to check out the OpenStack-Ansible code from GitHub, so ensure that the following packages are installed (among other needed dependencies):

      apt update
      apt install git python-dev bridge-utils lsof lvm2 tcpdump build-essential ntp ntpdate libyaml-dev libpython2.7-dev libffi-dev libssl-dev python-crypto python-yaml

- We then need to grab the OpenStack-Ansible code from GitHub. At the time of writing, the Pike release branch (16.x) is the one described here, but the steps are expected to remain the same for the foreseeable future. It is recommended that you use the latest stable tag by visiting https://github.com/openstack/openstack-ansible/tags. Here we're using the latest 16 (Pike) tag, denoted by 16.0.5:

      git clone -b 16.0.5 https://github.com/openstack/openstack-ansible.git /opt/openstack-ansible

  Tip: To use the Queens release, pass -b 17.0.0 instead. When the Rocky release is available, use -b 18.0.0.

- Ansible and the needed dependencies to successfully install OpenStack are set up by a script found in the /opt/openstack-ansible/scripts directory. Issue the following commands to bootstrap the environment:

      cd /opt/openstack-ansible
      scripts/bootstrap-ansible.sh

How it works…

The OpenStack-Ansible project provides a handy script to ensure that Ansible and the right dependencies are installed on the deployment host. This script (bootstrap-ansible.sh) lives in the scripts/ directory of the checked out OpenStack-Ansible code, so at this stage we need to grab the version we want to deploy using Git. Once we have the code, we can execute the script and wait for it to complete.

There’s more…

Visit https://docs.openstack.org/project-deploy-guide/openstack-ansible/latest for more information.

Configuring the installation

OpenStack-Ansible is a set of official Ansible playbooks and roles that lay down OpenStack with minimal prerequisites. Like any orchestration tool, most effort is done up front with configuration, followed by a hands-free experience when the playbooks are running. The result is a tried and tested OpenStack installation suitable for any size environment, from testing to production environments.

When we use OpenStack-Ansible, we are basically downloading the playbooks from GitHub onto a nominated deployment server. A deployment server is the host that has access to all the machines in the environment via SSH (and, for the most seamless experience, via keys). This deployment server can be one of the machines you've nominated as a part of your OpenStack environment, as Ansible does not consume any ongoing resources once it has run.

Note

Tip: Remember to back up the relevant configuration directories related to OpenStack-Ansible before you rekick an install of Ubuntu on this server!

Getting ready

Ensure that you are root on the deployment host. In most cases, this is the first infrastructure controller node, infra01.

How to do it…

Let’s assume that you’re using the first infrastructure node, infra01, as the deployment server.

If you have not followed the preceding Installing Ansible, playbooks, and dependencies recipe, then as root, carry out the following:

    git clone -b 16.0.5 https://github.com/openstack/openstack-ansible.git /opt/openstack-ansible

This downloads the OpenStack-Ansible playbooks to the /opt/openstack-ansible directory.

To configure our OpenStack deployment, carry out the following steps:

- We first copy the

etc/openstack_deployfolder out of the downloaded repository to/etc:cd /opt/openstack-ansible cp -R /etc/openstack_deploy /etcCopy - We now have to tell Ansible which servers will do which OpenStack function, by editing the

/etc/openstack_deploy/openstack_user_config.ymlfile, as shown here:cp /etc/openstack_deploy/openstack_user_variables.yml.example /etc/openstack_deploy_openstack_user_variables.yml vi /etc/openstack_deploy/openstack_user_variables.ymlCopy - The first section,

cidr_networks, describes the subnets used by OpenStack in this installation. Here we describe the container network (each of the OpenStack services are run inside a container, and this has its own network so that each service can communicate with each other). We describe the tunnel network (when a user creates a tenant network in this installation of OpenStack, this will create a segregated VXLAN network over this physical network). Finally, we describe the storage network subnet. Edit this file so that it looks like the following:cidr_networks: container: 172.29.236.0/24 tunnel: 172.29.240.0/24 storage: 172.29.244.0/24Copy - Continue editing the file to include any IP addresses that are already used by existing physical hosts in the environment where OpenStack will be deployed (and ensuring that you’ve included any reserved IP addresses for physical growth too). Include the addresses we have already configured leading up to this section. Single IP addresses or ranges (start and end placed either side of a ‘,’) can be placed here. Edit this section to look like the following, adjust as per your environment and any reserved IPs:

used_ips: - "172.29.236.20" - "172.29.240.20" - "172.29.244.20" - "172.29.236.101,172.29.236.117" - "172.29.240.101,172.29.240.117" - "172.29.244.101,172.29.244.117" - "172.29.248.101,172.29.248.117"Copy - The

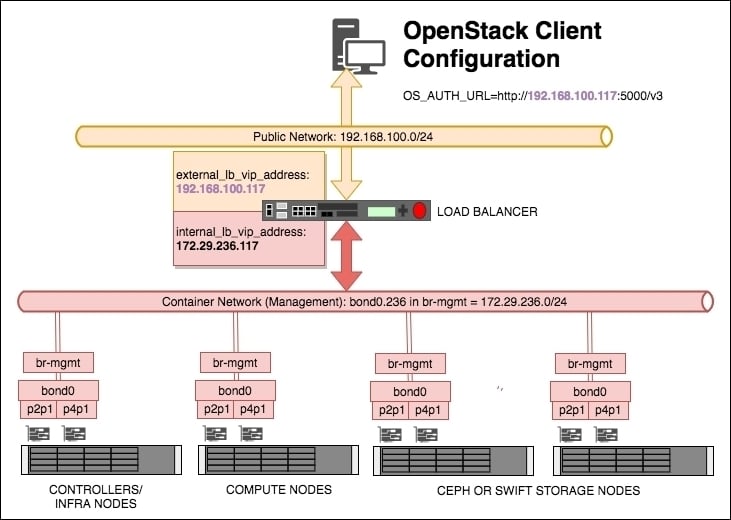

global_overridessection describes the bridges and other specific details of the interfaces used environment—particularly pertaining to how the container network attaches to the physical network interfaces. For the example architecture used in this book, the following output can be used. In most cases, the content in this section doesn’t need to be edited apart from the load balancer information at the start, so edit to suit:global_overrides: internal_lb_vip_address: 172.29.236.117 external_lb_vip_address: 192.168.100.117 lb_name: haproxy tunnel_bridge: "br-vxlan" management_bridge: "br-mgmt" provider_networks: - network: group_binds: - all_containers - hosts type: "raw" container_bridge: "br-mgmt" container_interface: "eth1" container_type: "veth" ip_from_q: "management" is_container_address: true is_ssh_address: true - network: group_binds: - neutron_linuxbridge_agent container_bridge: "br-vxlan" container_type: "veth" container_interface: "eth10" ip_from_q: "tunnel" type: "vxlan" range: "1:1000" net_name: "vxlan" - network: group_binds: - neutron_linuxbridge_agent container_bridge: "br-vlan" container_type: "veth" container_interface: "eth11" type: "vlan" range: "1:1" net_name: "vlan"Copy - The remaining section of this file describes which server each service runs from. Most of the sections repeat, differing only in the name of the service. This is fine as the intention here is to tell OpenStack-Ansible which server (we give it a name so that Ansible can refer to it by name, and reference the IP associated with it) runs the Nova API, RabbitMQ, or the Glance service, for example. As these particular example services run on our controller nodes, and in a production setting there are at least three controllers, you can quickly see why this information repeats. Other sections refer specifically to other services, such as OpenStack compute. For brevity, a couple of sections are shown here, but continue editing the file to match your networking:

# Shared infrastructure parts shared-infra_hosts: controller-01: ip: 172.29.236.110 controller-02: ip: 172.29.236.111 controller-03: ip: 172.29.236.112 # Compute Hosts compute_hosts: compute-01: ip: 172.29.236.113 compute-02: ip: 172.29.236.114Copy - Save and exit the file. We will now need to generate some random passphrases for the various services that run in OpenStack. In OpenStack, each service—such as Nova, Glance, and Neutron (which are described through the book)—themselves have to authenticate with Keystone, and be authorized to act as a service. To do so, their own user accounts need to have passphrases generated. Carry out the following command to generate the required passphrases, which would be later used when the OpenStack playbooks are executed:

cd /opt/openstack-ansible/scripts python pw-token-gen.py --file /etc/openstack_deploy/user_secrets.ymlCopy - Finally, there is another file that allows you to fine-tune the parameters of the OpenStack services, such as which backing store Glance (the OpenStack Image service) will be using, as well as configure proxy services ahead of the installation. This file is called

/etc/openstack_deploy/user_variables.yml. Let’s view and edit this file:cd /etc/openstack_deploy vi user_variables.ymlCopy - In a typical, highly available deployment—one in which we have three controller nodes—we need to configure Glance to use a shared storage service so that each of the three controllers have the same view of a filesystem, and therefore the images used to spin up instances. A number of shared storage backend systems that Glance can use range from NFS to Swift. We can even allow a private cloud environment to connect out over a public network and connect to a public service like Rackspace Cloud Files. If you have Swift available, add the following lines to

user_variables.ymlto configure Glance to use Swift:glance_swift_store_auth_version: 3 glance_default_store: swift glance_swift_store_auth_address: http://172.29.236.117:5000/v3 glance_swift_store_container: glance_images glance_swift_store_endpoint_type: internalURL glance_swift_store_key: '{{ glance_service_password }}' glance_swift_store_region: RegionOne glance_swift_store_user: 'service:glance'CopyNoteTip: Latest versions of OpenStack-Ansible are smart enough to discover if Swift is being used and will update their configuration accordingly. - View the other commented out details in the file to see if they need editing to suit your environment, then save and exit. You are now ready to start the installation of OpenStack!

How it works…

Ansible is a very popular server configuration tool that is well-suited to the task of installing OpenStack. Ansible takes a set of configuration files that playbooks (a defined set of steps that get executed on the servers) use to control how they are executed. For OpenStack-Ansible, configuration is split into two areas: describing the physical environment and describing how OpenStack is configured.

The first configuration file, /etc/openstack_deploy/openstack_user_config.yml, describes the physical environment. Each section is described here:

cidr_networks:
  container: 172.29.236.0/24
  tunnel: 172.29.240.0/24
  storage: 172.29.244.0/24

This section describes the networks required for an installation based on OpenStack-Ansible. Look at the following diagram to see the different networks and subnets.

- Container: Each container that gets deployed gets an IP address from this subnet. The load balancer also takes an IP address from this range.

- Tunnel: This is the subnet that forms the VXLAN tunnel mesh. Each container and compute host that participates in the VXLAN tunnel gets an IP from this range (the VXLAN tunnel is used when an operator creates a Neutron subnet that specifies the vxlan type, which creates a virtual network over this underlying subnet). Refer to Chapter 4, Neutron – OpenStack Networking for more details on OpenStack networking.

- Storage: This is the subnet that is used when a client instance spun up in OpenStack requests Cinder block storage.

used_ips:
  - "172.29.236.20"
  - "172.29.240.20"
  - "172.29.244.20"
  - "172.29.236.101,172.29.236.117"
  - "172.29.240.101,172.29.240.117"
  - "172.29.244.101,172.29.244.117"
  - "172.29.248.101,172.29.248.117"

The used_ips: section refers to IP addresses that are already in use on that subnet, or reserved for use by static devices. Such devices are load balancers or other hosts that are part of the subnets that OpenStack-Ansible would otherwise have randomly allocated to containers:

global_overrides:
  internal_lb_vip_address: 172.29.236.117
  external_lb_vip_address: 192.168.1.117
  tunnel_bridge: "br-vxlan"
  management_bridge: "br-mgmt"
  storage_bridge: "br-storage"

The global_overrides: section describes the details around how the containers and bridged networking are set up. OpenStack-Ansible’s default documentation expects Linux Bridge to be used; however, Open vSwitch can also be used. Refer to Chapter 4, Neutron – OpenStack Networking, for more details.

The internal_lb_vip_address: and external_lb_vip_address: sections refer to the private and public sides of a typical load balancer. The private (internal_lb_vip_address) is used by the services within OpenStack (for example, Nova calls communicating with the Neutron API would use internal_lb_vip_address, whereas a user communicating with the OpenStack environment once it has been installed would use external_lb_vip_address). See the following diagram:

A number of load balancer pools will be created for a given Virtual IP (VIP) address, describing the IP addresses and ports associated with a particular service. For each service, one pool will be created on the public/external network (in the example, a VIP address of 192.168.100.117 has been created for this purpose), and another on the VIP used internally by OpenStack (in the preceding example, the VIP address 172.29.236.117 has been created for this purpose).

The tunnel_bridge: section is the name given to the bridge that is used for attaching the physical interface that participates in the VXLAN tunnel network.

The management_bridge: section is the name given to the bridge that is used for all of the OpenStack services that get installed on the container network shown in the diagram.

The storage_bridge: section is the name given to the bridge that is used for traffic associated with attaching storage to instances or where Swift proxied traffic would flow.

Each of the preceding bridges must match the names you have configured in the /etc/network/interfaces file on each of your servers.
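A mismatch between these names is easy to miss, so a quick check can help. The following is a minimal sketch under stated assumptions: the function undeclared_bridges is hypothetical, it assumes the ifupdown "iface <name>" stanza style, and the sample stanzas are made up for the demonstration rather than taken from a real server.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report which bridge names are not declared with an
# "iface <name>" stanza in an interfaces file (ifupdown syntax).
undeclared_bridges() {
    local interfaces_file="$1" bridge
    shift
    for bridge in "$@"; do
        grep -Eq "^[[:space:]]*iface[[:space:]]+${bridge}[[:space:]]" "$interfaces_file" \
            || echo "$bridge"
    done
}

# Demonstration with a throwaway file standing in for /etc/network/interfaces.
# The stanzas below are illustrative only.
demo_file="$(mktemp)"
cat > "$demo_file" <<'EOF'
auto br-mgmt
iface br-mgmt inet static
    bridge_ports eth1
auto br-vxlan
iface br-vxlan inet manual
EOF
undeclared_bridges "$demo_file" br-mgmt br-vxlan br-storage
rm -f "$demo_file"
```

Here br-storage is reported because the sample file never declares it. On a real server, the first argument would be /etc/network/interfaces and the remaining arguments the bridge names from global_overrides.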

The next section, provider_networks, remains relatively static and untouched as it describes the relationship between container networking and the physical environment. Do not adjust this section.

Following the provider_networks section are the sections describing which server or group of servers run a particular service. Each block has the following syntax:

service_name:
  ansible_inventory_name_for_server:
    ip: IP_ADDRESS
  ansible_inventory_name_for_server:
    ip: IP_ADDRESS

Note

Tip: Ensure the correct and consistent spelling of each server name (ansible_inventory_name_for_server) to ensure correct execution of your Ansible playbooks.

A number of sections and their use are listed here:

- shared-infra_hosts: This supports the shared infrastructure software, which is MariaDB/Galera and RabbitMQ

- repo-infra_hosts: These host the repository containers for the specific version of OpenStack-Ansible requested

- haproxy_hosts: When using HAProxy for load balancing, this tells the playbooks where to install and configure this service

- os-infra_hosts: These include OpenStack API services such as Nova API and Glance API

- log_hosts: This is where the rsyslog server runs from

- identity_hosts: These are the servers that run the Keystone (OpenStack Identity) service

- storage-infra_hosts: These are the servers that run the Cinder API service

- storage_hosts: This is the section that describes Cinder LVM nodes

- swift-proxy_hosts: These are the hosts that would house the Swift Proxy service

- swift_hosts: These are the Swift storage nodes

- compute_hosts: This is the list of servers that make up your hypervisors

- image_hosts: These are the servers that run the Glance (OpenStack Image) service

- orchestration_hosts: These are the servers that run the Heat API (OpenStack Orchestration) services

- dashboard_hosts: These are the servers that run the Horizon (OpenStack Dashboard) service

- network_hosts: These are the servers that run the Neutron (OpenStack Networking) agents and services

- metering-infra_hosts: These are the servers that run the Ceilometer (OpenStack Telemetry) service

- metering-alarm_hosts: These are the servers that run the Ceilometer (OpenStack Telemetry) service associated with alarms

- metrics_hosts: These are the servers that run the Gnocchi component of Ceilometer

- metering-compute_hosts: When using Ceilometer, this is the list of compute hosts that need the metering agent installed
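Because the same servers appear under many of these sections, a misspelled server name is easy to introduce. The following sketch flags any IP address that is listed under more than one name. It is an illustration only: the function name is hypothetical, it assumes the simple layout used in this chapter (a server name indented under a section, followed by an ip: line), and it is not a YAML parser.

```shell
#!/usr/bin/env bash
# Hypothetical helper: list IP addresses that appear under more than one
# server name, which usually points to a misspelled inventory name.
ips_with_conflicting_names() {
    awk '
        # An indented "name:" line with nothing after the colon is a server name.
        /^[ \t]+[^ \t#]+:[ \t]*$/ { name = $1; sub(/:$/, "", name); next }
        # An indented "ip:" line belongs to the most recent server name.
        /^[ \t]+ip:/              { print $2, name }
    ' "$1" | sort -u | awk '
        { count[$1]++ }
        END { for (ip in count) if (count[ip] > 1) print ip }
    '
}

# Demonstration: "controler-01" is a deliberate typo sharing an IP address
# with controller-01.
demo_file="$(mktemp)"
cat > "$demo_file" <<'EOF'
shared-infra_hosts:
  controller-01:
    ip: 172.29.236.110
os-infra_hosts:
  controler-01:
    ip: 172.29.236.110
compute_hosts:
  compute-01:
    ip: 172.29.236.113
EOF
ips_with_conflicting_names "$demo_file"
rm -f "$demo_file"
```

The same server listed under several sections with identical spelling is not reported, because identical name and IP pairs are collapsed before counting.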

Running the OpenStack-Ansible playbooks

To install OpenStack, we simply run the relevant playbooks. There are three main playbooks in total that we will be using:

- setup-hosts.yml

- setup-infrastructure.yml

- setup-openstack.yml

Getting ready

Ensure that you are the root user on the deployment host. In most cases, this is the first infrastructure controller node, infra01.

How to do it…

To install OpenStack using the OpenStack-Ansible playbooks, you navigate to the playbooks directory of the checked out Git repository, then execute each playbook in turn:

- First change to the playbooks directory by executing the following command:

cd /opt/openstack-ansible/playbooks

- The first step is to run a syntax check on your scripts and configuration. As we will be executing three playbooks, we will execute the following against each:

openstack-ansible setup-hosts.yml --syntax-check
openstack-ansible setup-infrastructure.yml --syntax-check
openstack-ansible setup-openstack.yml --syntax-check

- Now we will run the first playbook using a special OpenStack-Ansible wrapper script to Ansible that configures each host that we described in the /etc/openstack_deploy/openstack_user_config.yml file:

openstack-ansible setup-hosts.yml

- After a short while, you should be greeted with a PLAY RECAP output that is all green (with yellow/blue lines indicating where any changes were made), with the output showing all changes were OK. If there are issues, scroll back through the output and watch out for anything printed in red. Refer to the Troubleshooting the installation recipe further on in this chapter.

If all is OK, we can proceed to run the next playbook for setting up load balancing. At this stage, it is important that the load balancer gets configured. OpenStack-Ansible installs the OpenStack services in LXC containers on each server, and so far we have not explicitly stated which IP address on the container network will have each particular service installed. This is because we let Ansible manage this for us. So while it might seem counter-intuitive to set up load balancing before we know where each service will be installed, Ansible has already generated a dynamic inventory ahead of any future work, so it already knows how many containers are involved and which container will have each service installed.

If you are using an F5 LTM, Brocade, or similar enterprise load balancing kit, it is recommended that you use HAProxy temporarily and view the generated configuration so that it can be manually transferred to the physical setup. To temporarily set up HAProxy to allow an installation of OpenStack to continue, modify your openstack_user_config.yml file to include a HAProxy host, then execute the following:

openstack-ansible haproxy-install.yml

- If all is OK, we can proceed to run the next playbook that sets up the shared infrastructure services as follows:

openstack-ansible setup-infrastructure.yml

- This step takes a little longer than the first playbook. As before, inspect the output for any failures. At this stage, we should have a number of containers running on each Infrastructure Node (also known and referred to as Controller Nodes). On some of these containers, such as the ones labelled Galera or RabbitMQ, we should see services running correctly, waiting for OpenStack to be configured against them. We can now continue the installation by running the largest of the playbooks: the installation of OpenStack itself. To do this, execute the following command:

openstack-ansible setup-openstack.yml

- This may take a while to run (possibly hours), so be prepared for this by ensuring your SSH session to the deployment host will not be interrupted, and safeguard against any disconnects by running the playbook in something like tmux or screen. At the end of the playbook run, if all is OK, congratulations, you have OpenStack installed!
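The three main playbooks can also be chained so that a failure stops the sequence. What follows is a minimal sketch rather than an official script: run_playbooks and the RUNNER variable are hypothetical names introduced here, and the temporary HAProxy step is left out. RUNNER defaults to the openstack-ansible wrapper and exists only so that the loop can be dry-run.

```shell
#!/usr/bin/env bash
# Sketch: run the three main playbooks in order and stop at the first failure.
# RUNNER is a hypothetical override; by default it is the openstack-ansible
# wrapper used throughout this recipe.
run_playbooks() {
    local runner="${RUNNER:-openstack-ansible}" playbook
    for playbook in setup-hosts.yml setup-infrastructure.yml setup-openstack.yml; do
        echo "==> ${playbook}"
        "$runner" "$playbook" || { echo "FAILED: ${playbook}" >&2; return 1; }
    done
}

# Dry run: substitute echo for the wrapper so that nothing is executed.
RUNNER=echo run_playbooks
```

On the deployment host, calling run_playbooks from /opt/openstack-ansible/playbooks without setting RUNNER would invoke the real wrapper, ideally inside tmux or screen as recommended above.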

How it works…

Installation of OpenStack using OpenStack-Ansible is conducted using a number of playbooks. The first playbook, setup-hosts.yml, sets up the hosts by laying down the container configurations. At this stage, Ansible knows where it will be placing all future services associated with OpenStack, so we use the dynamic inventory information to perform an installation of HAProxy and configure it for all the services used by OpenStack (that are yet to be installed). The next playbook, setup-infrastructure.yml, configures and installs the base Infrastructure services containers that OpenStack expects to be present, such as Galera. The final playbook is the main event—the playbook that installs all the required OpenStack services we specified in the configuration. This runs for quite a while—but at the end of the run, you are left with an installation of OpenStack.

The OpenStack-Ansible project provides a wrapper script to the ansible command that would ordinarily run to execute Playbooks. This is called openstack-ansible. In essence, this ensures that the correct inventory and configuration information is passed to the ansible command to ensure correct running of the OpenStack-Ansible playbooks.

Troubleshooting the installation

Ansible is a tool, written by people, that runs playbooks, written by people, to configure systems that would ordinarily be manually performed by people, and as such, errors can occur. The end result is only as good as the input.

Typical failures either occur quickly, such as connection problems, and will be relatively self-evident, or after long running jobs that may be as a result of load or network timeouts. In any case, the OpenStack-Ansible playbooks provide an efficient mechanism to rerun playbooks without having to repeat the tasks it has already completed.

On failure, Ansible produces a file in /root (as we’re running these playbooks as root) named after the playbook, with the file extension .retry. This file simply lists the hosts that failed, so it can be referenced when running the playbook again. The rerun then targets only the single host or small group of hosts that failed, which is far more efficient than rerunning against a large cluster of machines that completed successfully.

How to do it…

We will step through a problem that caused one of the playbooks to fail.

Note the failed playbook and then invoke it again with the following steps:

- Ensure that you’re in the playbooks directory as follows:

cd /opt/openstack-ansible/playbooks

- Now rerun that playbook, but specify the retry file:

openstack-ansible setup-openstack.yml --limit @/root/setup-openstack.retry

- In most situations, this will be enough to rectify the situation. However, OpenStack-Ansible has been written to be idempotent, meaning that the whole playbook can be run again, only modifying what it needs to. Therefore, you can run the playbook again without specifying the retry file.
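The choice between a limited rerun and a full rerun can be sketched as a small helper. This is an illustration under stated assumptions: rerun_args and RETRY_DIR are hypothetical names, and the helper relies on Ansible accepting a retry file through its --limit @<file> option. It only prints the arguments and does not run anything.

```shell
#!/usr/bin/env bash
# Sketch: build the arguments for rerunning a playbook. If a non-empty retry
# file exists for it, limit the run to the hosts listed there; otherwise
# rerun the whole playbook.
# RETRY_DIR is a hypothetical override; the text places retry files in /root.
rerun_args() {
    local playbook="$1"
    local retry_file="${RETRY_DIR:-/root}/${playbook%.yml}.retry"
    if [ -s "$retry_file" ]; then
        echo "$playbook --limit @${retry_file}"
    else
        echo "$playbook"
    fi
}

# Demonstration in a scratch directory with one made-up failed host.
RETRY_DIR="$(mktemp -d)"
echo "compute-01" > "${RETRY_DIR}/setup-openstack.retry"
rerun_args setup-openstack.yml   # limited to the hosts in the retry file
rerun_args setup-hosts.yml       # no retry file, so a full rerun
rm -rf "$RETRY_DIR"
```

The printed arguments would then be passed to the openstack-ansible wrapper.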

Should there be a failure at the first stage (the setup-hosts.yml playbook), execute the following:

- First remove the generated inventory files:

rm -f /etc/openstack_deploy/openstack_inventory.json
rm -f /etc/openstack_deploy/openstack_hostnames_ips.yml

- Now rerun the setup-hosts.yml playbook:

cd /opt/openstack-ansible/playbooks
openstack-ansible setup-hosts.yml

In some situations, it might be applicable to destroy the installation and begin again. As each service gets installed in LXC containers, it is very easy to wipe an installation and start from the beginning. To do so, carry out the following steps:

- We first destroy all of the containers in the environment:

cd /opt/openstack-ansible/playbooks
openstack-ansible lxc-containers-destroy.yml

You will be asked to confirm this action. Follow the on-screen prompts.

- We recommend that you uninstall the following package to avoid any conflicts with future runs of the playbooks, and also clear out any remnants of containers on each host:

ansible hosts -m shell -a "pip uninstall -y appdirs"

- Finally, remove the inventory information:

rm -f /etc/openstack_deploy/openstack_inventory.json /etc/openstack_deploy/openstack_hostnames_ips.yml

How it works…

Ansible is not perfect and neither are computers. Sometimes failures occur in the environment due to SSH timeouts, or some other transient failure. Also, despite Ansible trying its best to retry the execution of a playbook, the result might be a failure. Failure in Ansible is quite obvious: it is usually indicated by red text on the screen. In most cases, rerunning the offending playbook may get over some transient problems. Each playbook runs a specific set of tasks, and Ansible will state which task has failed. Troubleshooting why that particular task failed will eventually lead to a good outcome. In the worst case, you can reset your installation and start from the beginning.

Manually testing the installation

Once the installation has completed successfully, the first step is to test the install. Testing OpenStack involves both automated and manual checks.

Manual tests verify user journeys that may not normally be picked up through automated testing, such as ensuring Horizon is displayed properly.

Automated tests can be invoked using a testing framework such as Tempest, or the OpenStack benchmarking tool, Rally.

Getting ready

Ensure that you are root on the first infrastructure controller node, infra01.

How to do it…

The installation of OpenStack-Ansible creates several utility containers on each of the infra nodes. These utility hosts provide all the command-line tools needed to try out OpenStack, using the command line of course. Carry out the following steps to get access to a utility host and run various commands in order to verify an installation of OpenStack manually:

- First, view the running containers by issuing the following command:

lxc-ls -f

- As you can see, this lists a number of containers because the OpenStack-Ansible installation uses isolated Linux containers for running each service. Each one is listed with its IP address and running state. You can see here that the container network of 172.29.236.0/24 was used in this chapter, and why it was named this way. One of the containers on here is the utility container, named with the following format: nodename_utility_container_randomuuid. To access this container, you can SSH to it, or you can issue the following command:

lxc-attach -n infra01_utility_container_34u477d

- You will now be running a terminal inside this container, with access only to the tools and services belonging to that container. In this case, we have access to the required OpenStack clients. The first thing you need to do is source in your OpenStack credentials. The OpenStack-Ansible project writes out a generated bash environment file with an admin user and project that was set up during the installation. Load this into your bash environment with the following command:

source openrc

Note

Tip: You can also use the following syntax in Bash: . openrc

- Now you can use the OpenStack CLI to view the services and status of the environment, as well as create networks, and launch instances. A few handy commands are listed here:

openstack server list
openstack network list
openstack endpoint list
openstack network agent list

How it works…

The OpenStack-Ansible method of installing OpenStack installs OpenStack services into isolated containers on our Linux servers. On each of the controller (or infra) nodes are about 12 containers, each running a single service such as nova-api or RabbitMQ. You can view the running containers by logging into any of the servers as root and issuing the lxc-ls -f command. The -f parameter gives you a fuller listing showing the status of each container, such as whether it is running or stopped.

One of the containers on the infra nodes has utility in its name, and this is known as a utility container in OpenStack-Ansible terminology. This container has OpenStack client tools installed, which makes it a great place to start manually testing an installation of OpenStack. Each container has at least an IP address on the container network—in the example used in this chapter this is the 172.29.236.0/24 subnet. You can SSH to the IP address of this container, or use another lxc command to attach to it: lxc-attach -n <name_of_container>. Once you have a session inside the container, you can use it like any other system, provided those tools are available to the restricted four-walls of the container. To use OpenStack commands, however, you first need to source the resource environment file which is named openrc. This is a normal bash environment file that has been prepopulated during the installation and provides all the required credentials needed to use OpenStack straight away.
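Finding the utility container by eye works, but the lookup can also be scripted. The following sketch filters a list of container names for the utility container. The function name first_utility_container is hypothetical, the sample names are made up, and it assumes the nodename_utility_container_randomuuid naming format described earlier.

```shell
#!/usr/bin/env bash
# Sketch: pick the utility container from a list of container names read on
# standard input, one per line (for example, from "lxc-ls -1").
first_utility_container() {
    grep -m 1 '_utility_container'
}

# Demonstration with made-up container names instead of real lxc-ls output.
printf '%s\n' infra01_galera_container_1a2b3c4d \
              infra01_utility_container_34u477d \
              infra01_rabbit_mq_container_5e6f7a8b | first_utility_container
```

On an infra node, this could be combined with the attach command, for example lxc-attach -n "$(lxc-ls -1 | first_utility_container)".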

Modifying the OpenStack configuration

It would be ludicrous to think that all of the playbooks would need to be run again for a small change, such as changing the CPU contention ratio from 4:1 to 8:1. Instead, the playbooks have been developed and tagged so that the specific playbook associated with a particular project can be run on its own to reconfigure and restart the associated services so that they pick up the changes.

Getting ready

Ensure that you are root on the deployment host. In most cases, this is the first infrastructure controller node, infra01.

How to do it…

The following are common changes and how they can be made using Ansible. As we’ll adjust the configuration, all of these commands are executed from the same host you used to perform the installation.

To adjust the CPU overcommit/allocation ratio, carry out the following steps:

- Edit the /etc/openstack_deploy/user_variables.yml file and modify (or add) the following line (adjust the figure to suit):

nova_cpu_allocation_ratio: 8.0

- Now execute the following commands to make changes in the environment:

cd /opt/openstack-ansible/playbooks
openstack-ansible os-nova-install.yml --tags 'nova-config'

For more complex changes, for example, to add configuration that isn’t a simple one-line change in a template, we can use an alternative in the form of overrides. To make changes to the default Nova Quotas, carry out the following as an example:

- Edit the /etc/openstack_deploy/user_variables.yml file and modify (or add) the following lines (adjust the figures to suit):

nova_nova_conf_overrides:
  DEFAULT:
    quota_fixed_ips: -1
    quota_floating_ips: 20
    quota_instances: 20

- Now execute the following commands to make changes in the environment:

cd /opt/openstack-ansible/playbooks
openstack-ansible os-nova-install.yml --tags 'nova-config'

Changes for Neutron, Glance, Cinder, and all other services are modified in a similar way. Adjust the name of the service in the syntax used. For example, to change a configuration item in the neutron.conf file, you would use the following syntax:

neutron_neutron_conf_overrides:
  DEFAULT:
    dhcp_lease_duration: -1

Then execute the following commands:

cd /opt/openstack-ansible/playbooks
openstack-ansible os-neutron-install.yml --tags 'neutron-config'

How it works…

We modified the same OpenStack-Ansible configuration files as in the Configuring the installation recipe and executed the openstack-ansible command, specifying the playbook that corresponded to the service we wanted to change. As we were making configuration changes, we notified Ansible of this through the --tags parameter.
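The pattern shared by the Nova and Neutron examples can be captured in a one-line helper. This is a sketch under an explicit assumption: it presumes every service follows the os-<service>-install.yml playbook name and <service>-config tag seen in those two examples, which may not hold for all services, so check the playbooks directory before relying on it. The function name reconfigure_command is hypothetical, and it only prints the command.

```shell
#!/usr/bin/env bash
# Sketch: print the command that re-applies configuration for one service.
# Assumes the naming pattern seen in the Nova and Neutron examples
# (os-<service>-install.yml and the <service>-config tag).
reconfigure_command() {
    local service="$1"
    echo "openstack-ansible os-${service}-install.yml --tags '${service}-config'"
}

reconfigure_command nova
reconfigure_command neutron
```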

Refer to https://docs.openstack.org/ for all configuration options for each service.

Virtual lab – vagrant up!

In an ideal world, each of us would have access to physical servers and the network kit in order to learn, test, and experiment with OpenStack. However, most of the time this isn’t the case. An orchestrated virtual lab, built with Vagrant and VirtualBox, allows you to experience this chapter on OpenStack-Ansible using your laptop.

The following Vagrant lab can be found at http://openstackbook.online/.

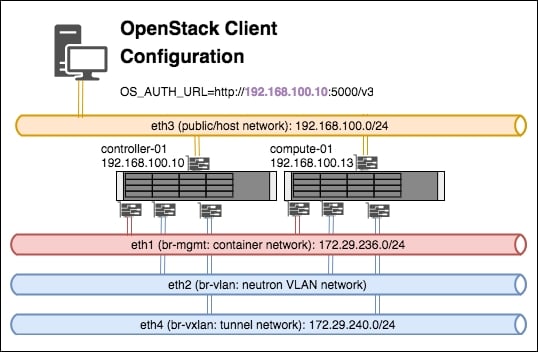

This is the architecture of the Vagrant-based OpenStack environment:

Essentially there are three virtual machines that are created (a controller node, a compute node and a client machine), and each host has four network cards (plus an internal bridged interface used by VirtualBox itself). The four network cards represent the networks described in this chapter:

- Eth1: This is included in the br-mgmt bridge, and used by the container network

- Eth2: This is included in the br-vlan bridge, and used when a VLAN-based Neutron network is created once OpenStack is up and running

- Eth3: This is the client or host network, the network we would be using to interact with OpenStack services (for example, the public/external side of the load balancer)

- Eth4: This is included in the br-vxlan bridge, and used when a VXLAN-based Neutron overlay network is created once OpenStack is up and running

Note that the virtual machine called openstack-client, which gets created in this lab, provides you with all the command-line tools to conveniently get you started with working with OpenStack.

Getting ready

In order to run a multi-node OpenStack environment, running as a virtual environment on your laptop or designated host, the following set of requirements are needed:

- A Linux, Mac, or Windows desktop, laptop or server. The authors of this book use macOS and Linux, with Windows as the host desktop being the least tested configuration.

- At least 16 GB RAM. 24 GB is recommended.

- About 50 GB of disk space. The virtual machines that provide the infra and compute nodes in this virtual environment are thin provisioned, so this requirement is just a guide depending on your use.

- An internet connection. The faster the better, as the installation relies on downloading files and packages directly from the internet.

How to do it…

To run the OpenStack environment within the virtual environment, we need a few programs installed, all of which are free to download and use: VirtualBox, Vagrant, and Git. VirtualBox provides the virtual servers representing the servers in a normal OpenStack installation; Vagrant describes the installation in a fully orchestrated way; Git allows us to check out all of the scripts that we provide as part of the book to easily test a virtual OpenStack installation. The following instructions describe an installation of these tools on Ubuntu Linux.

We first need to install VirtualBox if it is not already installed. We recommend downloading the latest available releases of the software. To do so on Ubuntu Linux as root, follow these steps:

- We first add the virtualbox.org repository key with the following command:

wget -q https://www.virtualbox.org/download/oracle_vbox_2016.asc -O- | sudo apt-key add -

- Next we add the repository file to our apt configuration, by creating a file called /etc/apt/sources.list.d/virtualbox.list with the following contents:

deb http://download.virtualbox.org/virtualbox/debian xenial contrib

- We now run an apt update to refresh and update the apt cache with the following command:

apt update

- Now install VirtualBox with the following command:

apt install virtualbox-5.1

Once VirtualBox is installed, we can install Vagrant. Follow these steps to install Vagrant:

- Vagrant is downloaded from https://www.vagrantup.com/downloads.html. The version we want is Debian 64-Bit. At the time of writing, this is version 2.0.1. To download it on our desktop, issue the following command:

wget https://releases.hashicorp.com/vagrant/2.0.1/vagrant_2.0.1_x86_64.deb

- We can now install the file with the following command:

dpkg -i ./vagrant_2.0.1_x86_64.deb

The lab utilizes two vagrant plugins: vagrant-hostmanager and vagrant-triggers. To install these, carry out the following steps:

- Install vagrant-hostmanager using the vagrant tool:

vagrant plugin install vagrant-hostmanager

- Install vagrant-triggers using the vagrant tool:

vagrant plugin install vagrant-triggers

If Git is not currently installed, issue the following commands to install Git on an Ubuntu machine:

apt update

apt install git
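With the installs done, a quick check that each tool is on the PATH can save a failed vagrant up later. The following is a minimal sketch: the function name missing_tools is hypothetical, and VBoxManage is used here as the command that indicates VirtualBox is installed.

```shell
#!/usr/bin/env bash
# Sketch: print the names of any commands that are not on the PATH.
missing_tools() {
    local tool
    for tool in "$@"; do
        command -v "$tool" > /dev/null 2>&1 || echo "$tool"
    done
}

# The lab needs VirtualBox (checked via VBoxManage), Vagrant, and Git.
missing="$(missing_tools VBoxManage vagrant git)"
if [ -n "$missing" ]; then
    # Unquoted on purpose so that the names are joined onto one line.
    echo "Not installed: $(echo $missing)"
else
    echo "All lab tools found"
fi
```

The check only confirms that the commands exist. It does not verify versions or that the two Vagrant plugins are installed.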

Now that we have the required tools, we can use the OpenStackCookbook Vagrant lab environment to perform a fully orchestrated installation of OpenStack in a VirtualBox environment:

- We will first check out the lab environment scripts and supporting files with git by issuing the following command:

git clone https://github.com/OpenStackCookbook/vagrant-openstack

- We will change into the vagrant-openstack directory that was just created:

cd vagrant-openstack

- We can now orchestrate the creation of the virtual machines and installation of OpenStack using one simple command:

vagrant up

Note

Tip: This will take quite a while as it creates the virtual machines and runs through all the same playbook steps described in this chapter.

How it works…

Vagrant is an awesome tool for orchestrating many different virtual and cloud environments. It allows us to describe what virtual servers need to be created, and Vagrant’s provisioners allow us to run scripts once a virtual machine has been created.

Vagrant’s environment file is called Vagrantfile. You can edit this file to adjust the settings of the virtual machine, for example, to increase the RAM or number of available CPUs.

This allows us to bring up a complete OpenStack environment using one command:

vagrant up

The environment consists of the following:

- A controller node, infra-01

- A compute node, compute-01

- A client virtual machine, openstack-client

Once the environment has finished installing, you can use the environment by navigating to http://192.168.100.10/ in your web browser. To retrieve the admin password, follow the steps given here and view the file named openrc.

There is a single controller node that has a utility container configured for use in this environment. Attach to this with the following commands:

vagrant ssh controller-01

sudo -i

lxc-attach -n $(lxc-ls -1 | grep utility)

cat openrc

Once you have retrieved the openrc details, copy these to your openstack-client virtual machine. From here you can operate OpenStack, mimicking a desktop machine accessing an installation of OpenStack utilizing the command line.

vagrant ssh openstack-client

source openrc

You should now be able to use OpenStack CLI tools to operate the environment.